When Propaganda Becomes a Weapon: Inside the EU’s New Centre for Democratic Resilience

Not long ago, disinformation was treated as background noise in politics – unpleasant, but hardly a national security issue. Today, it is openly discussed alongside cyber-attacks, drone incursions and economic coercion as part of “hybrid warfare.” From fake media websites to AI-generated videos, information itself has become a battlefield.

The European Union’s latest answer to this challenge is the European Centre for Democratic Resilience (ECDR) – a new hub designed to detect, analyse and counter foreign information manipulation across the bloc and its neighbours. The Centre sits at the core of the European Commission’s emerging “European Democracy Shield”, a package of measures to defend elections, independent media and civic space from hostile influence operations – particularly those linked to Russia and China.

This is not just a Brussels bureaucracy story. How the EU chooses to fight disinformation will shape the future of online regulation, influence how platforms behave globally, and set expectations for how democracies respond when propaganda becomes a weapon.

Disinformation as a security threat, not a PR problem

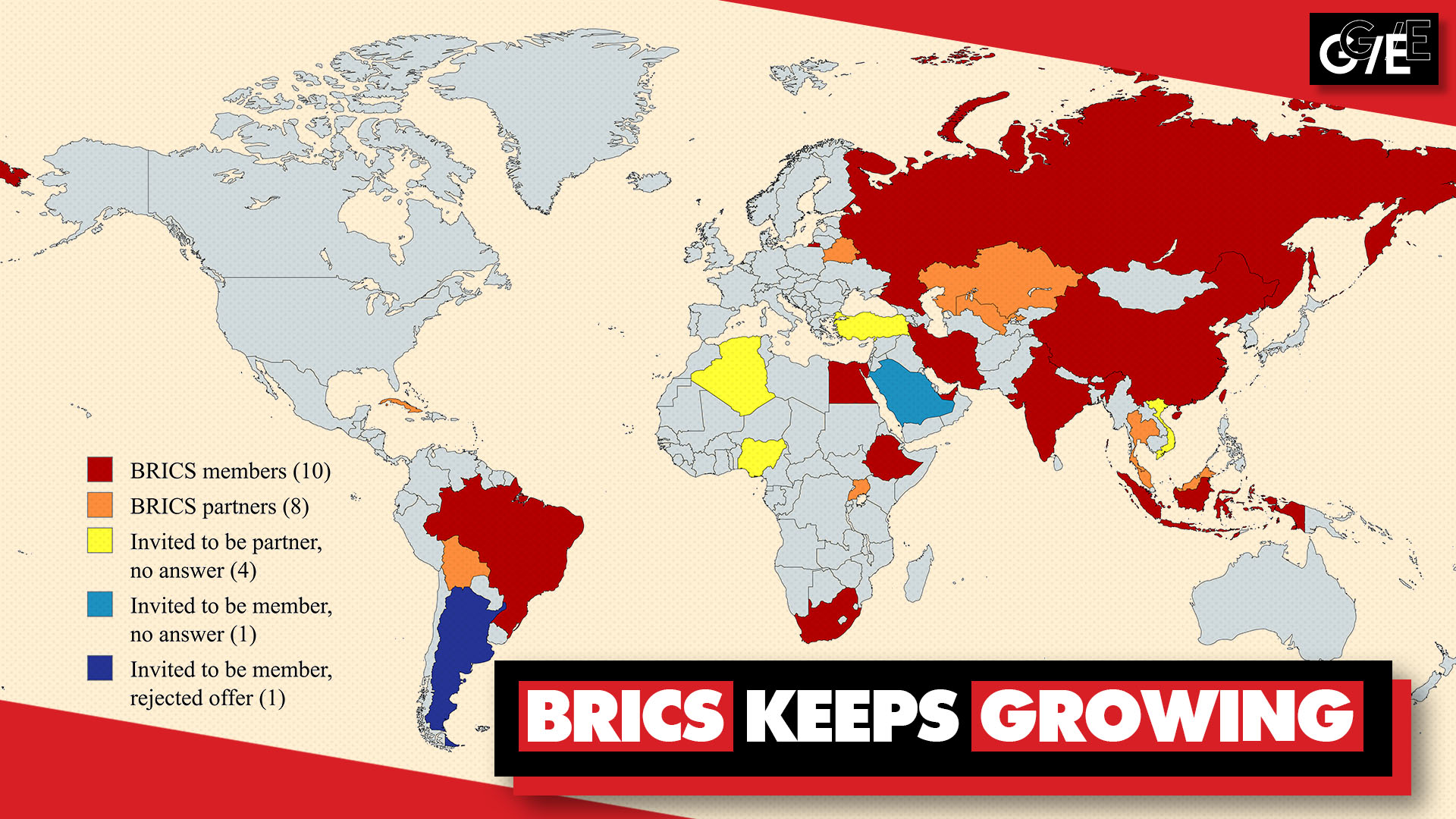

The EU now treats Foreign Information Manipulation and Interference (FIMI) as a strategic threat: a deliberate use of information to weaken societies, polarise debates and interfere with elections. The European External Action Service (EEAS) has documented how foreign actors, chiefly Russia and increasingly China, use networks of state media, proxy sites, influencers and bots to erode trust in institutions, undermine support for Ukraine and promote authoritarian narratives.

Recent cases show how serious the stakes have become:

The first round of Romania’s 2024 presidential election was annulled after investigators uncovered a sophisticated TikTok-driven influence campaign backing a far-right, pro-Russian candidate, allegedly linked to a covert influencer agency operating from Poland.

In Germany, a Russian-linked campaign tied to the broader “Doppelgänger” operation has used fake news outlets and social content to boost far-right parties and sow doubt about mainstream candidates ahead of key elections.

Drone incursions into EU/NATO airspace – such as a recent incident over Poland – have been followed by online waves of misleading posts blaming Ukraine or alleging “false flags,” showing how physical provocations and digital narratives are now tightly intertwined.

In other words, disinformation is no longer just about “fake news”: it is a layer of hybrid conflict that blends military, cyber, economic and informational pressure.

If you’ve already explored how AI is reshaping cyber operations in our piece on AI in cyber warfare, the ECDR can be seen as the political and institutional counterpart to that technological shift.

What is the European Centre for Democratic Resilience?

The European Centre for Democratic Resilience is the flagship institution inside the new European Democracy Shield agenda. According to the Commission’s plans and leaked drafts, it is designed as a hub rather than a single “super-agency”, with four main functions:

Pooling expertise across EU member states and candidate countries (such as Ukraine and Western Balkan states).

Coordinating early warnings when influence operations are detected – for example, cloned websites or coordinated bot campaigns around elections.

Supporting rapid responses, including fact-checking networks and communication campaigns.

Working with platforms and tech companies under the EU’s existing Digital Services Act (DSA) rules and disinformation codes of practice.

Crucially, participation is voluntary, but the political expectation is that any country wanting to be part of the EU’s democratic space – or aspiring to join it – will plug into this infrastructure.

The Centre will not start from scratch. It builds on:

The EEAS StratCom units, which have been tracking Russian and Chinese information operations for years.

EU-level policies like the Digital Services Act, which obliges major platforms to assess and mitigate systemic risks such as disinformation.

New rules like the European Media Freedom Act, which aim to safeguard independent journalism and limit political control over media.

Think of the ECDR as the orchestrator of Europe’s disinformation defence: spotting patterns earlier, avoiding duplication between capitals, and ensuring that responses are not purely national, because the campaigns targeting voters rarely are.

Why now? Elections, AI and the “Democracy Shield”

The timing is not accidental. The Centre sits inside a broader “Democracy Shield” package unveiled in late 2025, which focuses on three pillars: free and fair elections, independent media and a vibrant civil society.

Several converging trends have forced the EU’s hand:

Election super-cycle. Europe, the U.S. and many key partners are working through packed electoral calendars. As we discussed in How upcoming U.S. and EU elections are influencing global security strategies, hostile actors see these moments as opportunities to amplify divisions, suppress turnout and question the legitimacy of results.

AI-powered manipulation. Deepfakes, synthetic voices and AI-generated text can now be produced cheaply at scale. The Democracy Shield explicitly calls on major platforms to detect and label AI-manipulated content, and to coordinate under a new “crisis protocol” when large-scale campaigns are detected.

Hybrid pressure from Russia and China. Following Russia’s invasion of Ukraine and rising tensions with China, disinformation is increasingly used alongside energy leverage, cyber-attacks and trade measures. The EU has already sanctioned several Russian entities for coordinated disinformation and FIMI activities.

Fragmented national responses. Until now, some member states had highly developed disinformation units, while others had almost none. That patchwork created vulnerabilities that hostile actors could exploit – for instance, using weaker jurisdictions as springboards for campaigns that then radiated across the EU.

The ECDR is meant to turn that patchwork into a more coherent defensive shield.

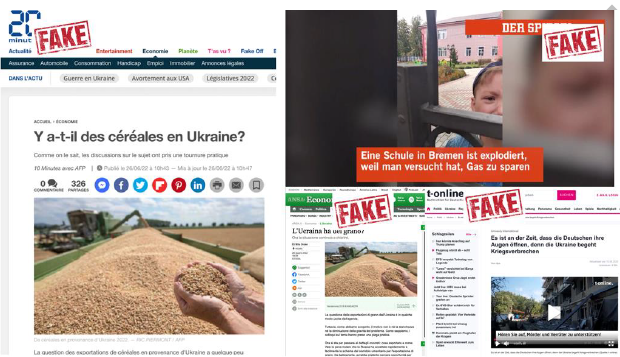

Case study: Operation “Doppelgänger” and the cloning of the news

No discussion of modern disinformation in Europe is complete without Operation “Doppelgänger” – a Russian-linked campaign that illustrates why early warning and coordination are so vital.

How “Doppelgänger” works

Since 2022, investigators have traced a persistent operation that clones legitimate media outlets – including major newspapers and TV channels in France, Germany, Poland, the U.S. and beyond – using near-identical domain names and visual design. These fake sites then publish articles tailored to undermine support for Ukraine, question sanctions, promote far-right narratives or flood debates on issues such as LGBTQ+ rights and migration.

The goal is twofold:

Externally, to erode Western public support for Ukraine and fragment allied cohesion.

Internally, to generate content that can be recycled into Russian-language ecosystems, creating the illusion that “the West itself” is turning against its policies.

The network has used fake sites styled after Fox News or The Washington Post to push anti-Ukraine talking points, and even leveraged celebrity deepfakes (for example, fabricated quotes attributed to global music stars) to reach apolitical audiences.
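For readers curious about what early warning could look like at the technical level, the cloning technique described above, near-identical domain names, lends itself to a simple illustration. The sketch below is not how the ECDR or any investigator actually works; it is a minimal example, assuming a hypothetical watchlist of legitimate outlet domains and using plain string similarity from Python's standard library. The candidate domains are invented for illustration and are not taken from any investigation.

```python
from difflib import SequenceMatcher

# Hypothetical watchlist of legitimate outlet domains (illustrative only).
LEGITIMATE_DOMAINS = ["spiegel.de", "lemonde.fr", "washingtonpost.com"]


def similarity(a: str, b: str) -> float:
    """Return a 0..1 similarity ratio between two domain strings."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def flag_lookalikes(candidates, threshold=0.8):
    """Return (candidate, legitimate_domain, score) for suspicious near-matches.

    The 0.8 threshold is an arbitrary assumption chosen for this sketch,
    not a calibrated value.
    """
    flagged = []
    for candidate in candidates:
        name = candidate.lower()
        # An exact match is the real outlet, not a clone.
        if name in LEGITIMATE_DOMAINS:
            continue
        for legit in LEGITIMATE_DOMAINS:
            score = similarity(name, legit)
            if score >= threshold:
                flagged.append((candidate, legit, round(score, 2)))
    return flagged


if __name__ == "__main__":
    # Invented candidate domains, as might appear in newly registered domain feeds.
    seen = ["spiege1.de", "washingtonpost-news.com", "example.org", "lemonde.fr"]
    for candidate, legit, score in flag_lookalikes(seen):
        print(f"{candidate} resembles {legit} (similarity {score})")
```

String similarity alone would miss clones that reuse the exact outlet name under a different top-level domain or that rely on visually confusable Unicode characters, which is one reason real detection depends on human analysts and shared intelligence rather than a single automated check.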

Can such campaigns be stopped?

Investigative work by NGOs and media consortia has shown that targeted exposure and cooperation with infrastructure providers can disrupt operations. In 2024, a major European investigation helped identify a Ukraine-based service provider used by Doppelgänger; once confronted, the provider blocked the customer, significantly slowing the campaign.

Yet Doppelgänger also illustrates a core problem the ECDR is meant to tackle:

Detection often starts with civil society or individual member states.

The campaign quickly shifts infrastructure and tactics once unmasked.

Without swift, EU-wide alerts and a mechanism to brief platforms, the same techniques reappear in another language or country.

By pooling intelligence across borders and linking national authorities, fact-checkers and platforms, the ECDR aims to spot these patterns earlier and respond more coherently.

Russia, China and the evolution of influence operations

While Russia remains the most visible actor behind aggressive disinformation in Europe, the EU increasingly flags China as a structured FIMI actor as well.

Russian playbook

Russia’s tactics in the European information space include:

Long-term narrative building (“sanctions don’t work,” “Western elites are corrupt,” “Ukraine is neo-Nazi”) repeated across state media, proxy sites and social networks.

Election interference, as seen in Romania’s annulled vote or the campaigns targeting German elections.

Hybrid incidents, such as drone incursions or mysterious infrastructure damage, followed by online narratives that confuse attribution and blame domestic elites.

China’s subtler approach

China’s approach in Europe is typically less overtly confrontational but still strategic:

Using PR firms, lobbyists and “friendship” media partnerships to seed pro-Beijing narratives.

Leveraging influencers and social platforms (including TikTok) to shape perceptions around issues like trade, 5G, or Taiwan, as highlighted in EEAS reporting on FIMI.

The ECDR will be tasked with tracking both types of behaviour – from cruder, high-volume troll campaigns to more subtle, long-term reputation shaping.

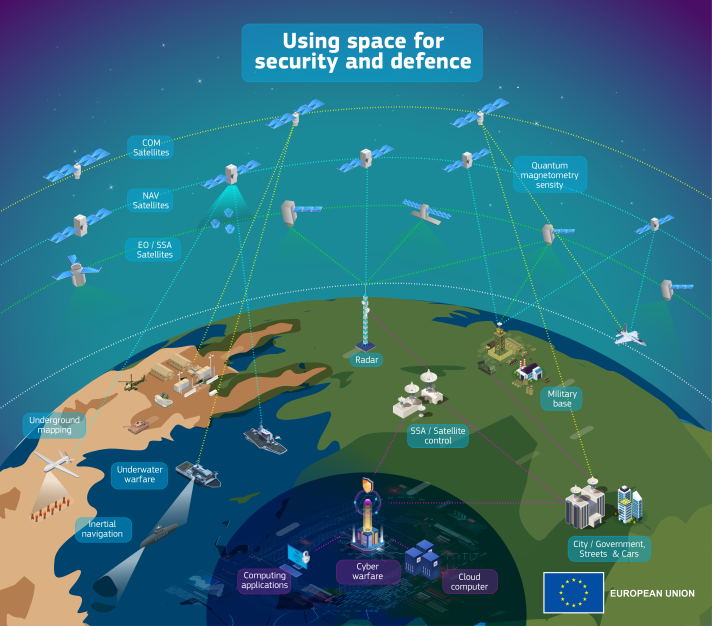

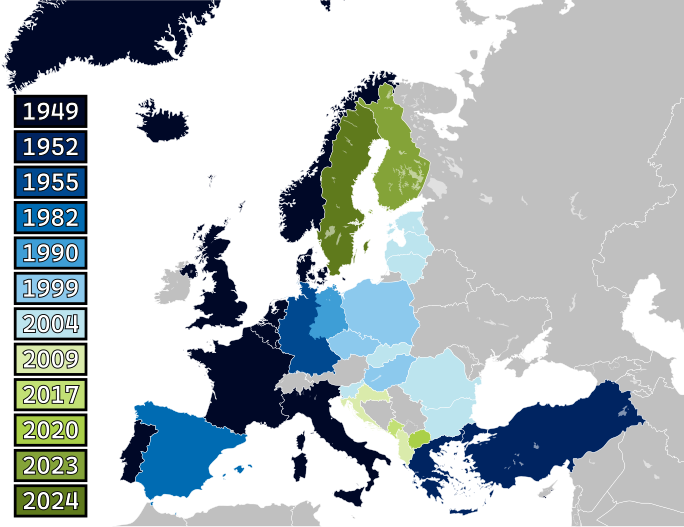

For readers who have followed our exploration of NATO’s expansion and Arctic militarization, it is useful to see these information operations as the “soft edge” of wider strategic competition that also plays out in geography, energy and defence.

How the Centre changes the game for EU member states

For EU governments, the Centre’s creation brings both support and pressure.

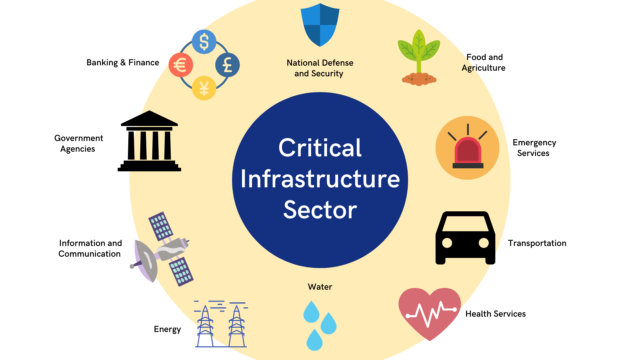

1. Upgrading national cyber and information-security capabilities

Member states will be expected to:

Develop or strengthen national FIMI units capable of feeding data to the ECDR.

Integrate disinformation detection into broader cybersecurity and crisis-management frameworks.

Improve data-sharing and classification rules so that insights can move more easily between intelligence services, regulators and the ECDR.

In practice, this means significant investment in technical monitoring, human analysis and cross-border liaison officers – not just in Brussels, but in national capitals.

2. Scaling media literacy and fact-checking

The Democracy Shield emphasises societal resilience, not just state capacity. That involves:

Funding and networking independent fact-checking organisations.

Integrating media literacy and critical thinking into school curricula.

Supporting public service media and local journalism, which are often first to spot targeted narratives against specific communities.

The ECDR can make this more effective by coordinating best practices between countries and channelling EU funds toward what actually works on the ground – rather than reinventing the wheel 27 times.

3. Navigating civil liberties and political sensitivities

Any EU-level structure dealing with “information” carries political risks:

Who decides which narratives are “foreign interference” and which are legitimate domestic criticism?

How can tools designed for external threats be kept from being used to monitor or stigmatise domestic opposition?

How transparent and accountable will the ECDR be?

These questions are already debated by scholars and civil society, who warn against mission creep and stress the need for robust safeguards, transparency and judicial oversight.

The new expectations for companies and platforms

The Centre does not operate in a vacuum. Much of its impact will depend on how effectively it can coordinate with tech companies, media platforms and advertising ecosystems.

Under the Digital Services Act, the largest platforms must:

Conduct systemic risk assessments regarding disinformation and electoral interference.

Provide data access to regulators and vetted researchers.

Implement mitigation measures, from algorithmic changes to better labelling of manipulated content.

The Democracy Shield and ECDR add political weight – and practical infrastructure – to these obligations:

When the ECDR identifies a major campaign (for example, a Doppelgänger-style clone network), it can feed insights into a DSA crisis protocol, triggering coordinated responses across multiple platforms.

The Commission is also exploring partnerships with influencers and content creators to promote trustworthy information, recognising their role in shaping political discourse, especially among younger voters.

For platforms, this means higher expectations:

Faster reaction to EU-level alerts.

Clearer labelling or removal of coordinated inauthentic behaviour.

Greater scrutiny over ad networks and monetisation systems that may be exploited by foreign actors.

Over time, these standards will likely influence global norms – much as EU privacy rules (GDPR) did. Companies outside Europe may find it easier to adopt ECDR/DSA-compatible policies globally rather than operating completely different rule sets.

What this means for non-EU countries

The Centre’s remit will formally focus on the EU and its candidate countries, but its effects will spill over well beyond Europe.

1. A new anchor for transatlantic and like-minded cooperation

The ECDR can become a natural partner for NATO, the U.S., the UK, Canada, Japan and others who are facing similar influence operations. Shared datasets, joint threat assessments and coordinated messaging could:

Increase the costs for hostile actors, who would face more unified exposure.

Reduce duplication between Western initiatives.

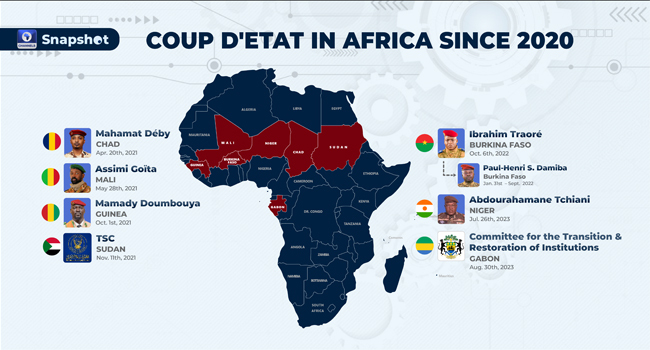

Support partners in regions like the Sahel or Eastern Europe, where disinformation, coups and security crises increasingly intersect – themes we unpacked in African coups and the global response.

2. A model for regulatory “export”

Non-EU democracies may borrow elements of the Democracy Shield:

Specialized centres or task forces modelled on the ECDR.

Legal obligations for platforms inspired by the DSA.

Funding mechanisms for fact-checking and media literacy.

For governments in the Western Balkans, Eastern Partnership countries or even further afield, alignment with ECDR standards could become a way to signal democratic credibility and deepen ties with the EU.

3. Pressure points and friction

Not everyone will welcome this evolution:

Authoritarian states may accuse the EU of information “hegemony” or double standards.

Some democracies may fear that the EU’s regulatory gravity will indirectly affect their own information ecosystem, especially if global platforms harmonise upwards to meet EU expectations.

Still, in a world where disinformation travels at internet speed, isolated national responses are increasingly unrealistic. The ECDR model – if it proves effective and transparent – may be one of the few scalable approaches democracies have.

Key challenges ahead

The Centre is ambitious, but its success is far from guaranteed. Several challenges loom large:

Defining success. How do you measure whether disinformation was “countered”? Fewer viral falsehoods? Higher trust in institutions? Stable voter turnout? The ECDR will need clear metrics that go beyond counting removed posts.

Keeping politics at arm’s length. To maintain legitimacy, the Centre must be perceived as non-partisan – focused on foreign manipulation, not on adjudicating domestic political disputes.

Information overload. Analysts already face a firehose of data. The risk is that the ECDR becomes a repository of unprocessed alerts rather than a strategic brain. Investments in analytical capacity, not just sensors, will be vital.

Staying ahead of AI advances. Adversaries will use generative AI to evolve tactics quickly. The Centre will need to partner with AI labs, academia and civil society to understand and anticipate these shifts.

Protecting fundamental rights. Any measures that touch content moderation, surveillance or data-sharing must be subject to strong legal safeguards, including judicial oversight and meaningful avenues for redress.

From ad-hoc firefighting to a systemic defence

The EU’s proposal for a Centre for Democratic Resilience marks a shift from ad-hoc crisis responses toward a more systemic approach to disinformation as a security issue.

It recognises that propaganda and information manipulation are integral to modern conflict, not a side effect.

It tries to knit together national authorities, EU institutions, platforms and civil society into a single, flexible network.

It sends a message to hostile actors that Europe is no longer content to merely expose their operations after the fact.

For businesses, media and citizens inside and outside the EU, this will gradually reshape expectations:

Platforms will be judged not just by engagement metrics but by their resilience to manipulation.

Governments will be expected to have plans and partnerships in place long before major elections or crises.

Citizens will increasingly be treated not just as voters, but as critical participants in a shared information security environment.

In previous articles like How upcoming U.S. and EU elections are influencing global security strategies and AI in cyber warfare, we’ve argued that the next decade of security will be defined as much by data, narratives and trust as by tanks or missiles. The European Centre for Democratic Resilience is one of the first attempts to build an institution around that reality.

Whether it becomes a genuine shield – or just another acronym in Brussels – will depend on how quickly it can move from concept to practice, how transparent it is willing to be, and how effectively it can work with those who ultimately matter most in any democracy:

The people consuming, sharing and contesting information every day.